flan-t5-xl

Maintainer: replicate


| Property | Value |
|---|---|
| Model Link | View on Replicate |
| API Spec | View on Replicate |
| GitHub Link | View on GitHub |
| Paper Link | View on arXiv |

Get summaries of the top AI models delivered straight to your inbox:

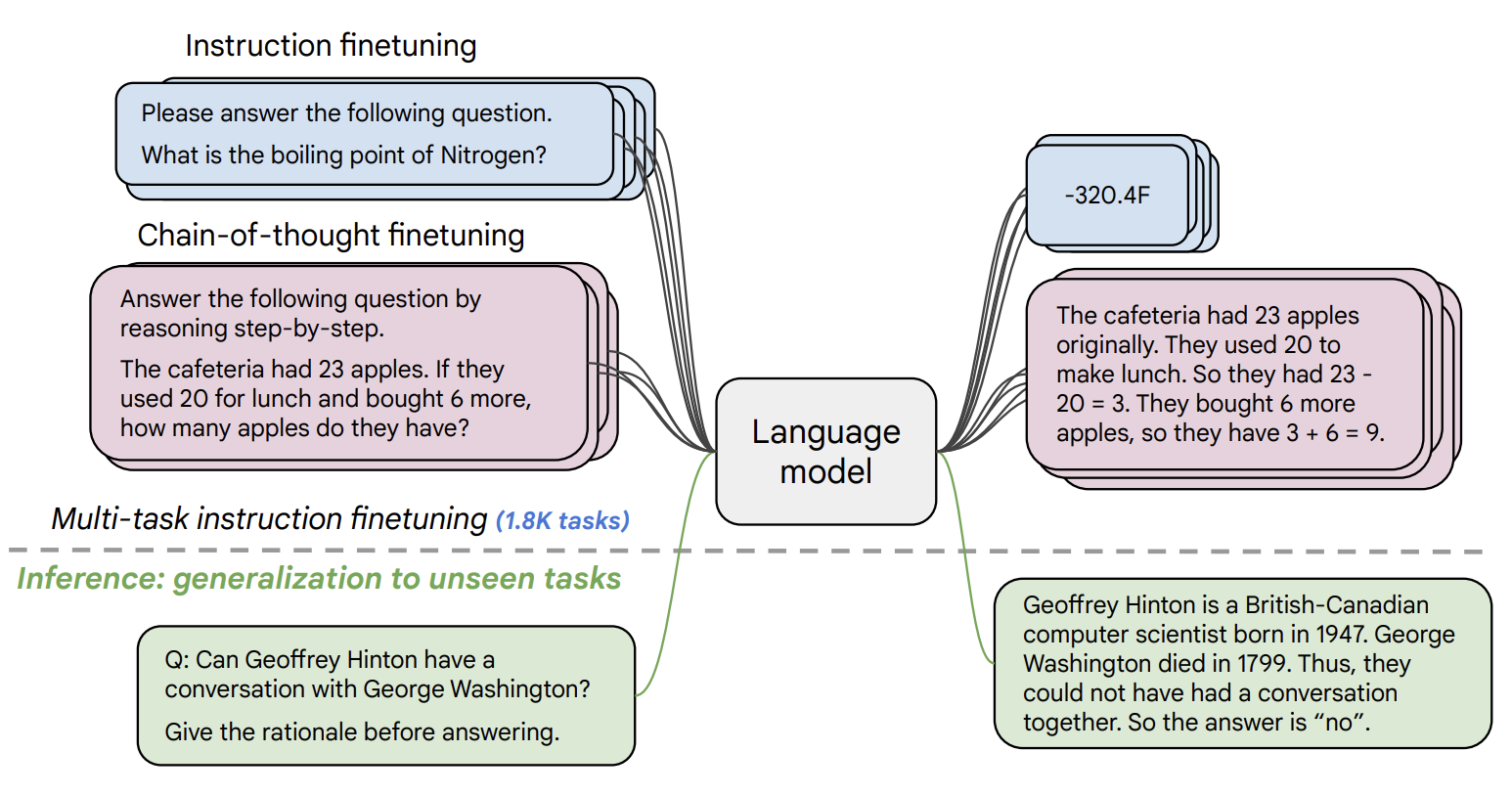

Model overview

flan-t5-xl is a large language model developed by Google and based on the T5 encoder-decoder architecture. It is a FLAN (Finetuned Language Net) model, meaning it has been instruction-tuned on a diverse collection of more than 1,000 tasks and datasets to improve its performance across a wide range of language understanding and generation tasks. flan-t5-xl is the XL variant of the family, with roughly 3 billion parameters, sitting between the Large and XXL sizes.

Similar models include the smaller [object Object] model and the even larger FLAN-T5-XXL model. There is also the multilingual [object Object] model, which is designed for multilingual tasks.

Model inputs and outputs

The flan-t5-xl model takes in text prompts as input and generates text outputs. The model can be used for a variety of natural language processing tasks such as classification, summarization, translation, and more.

Inputs

- prompt: The text prompt to send to the FLAN-T5 model

Outputs

- generated text: The text generated by the model in response to the input prompt
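Because the only input is a free-form prompt, the task is selected entirely by how the prompt is worded. The sketch below shows one way to assemble task-specific prompts before sending them as the `prompt` input. The template wording, the `TEMPLATES` table, and the `build_prompt` helper are hypothetical illustrations, not an official FLAN-T5 or Replicate format.

```python
# Hypothetical instruction templates; the exact wording is an assumption,
# not an official FLAN-T5 prompt format.
TEMPLATES = {
    "summarize": "Summarize the following text:\n{text}",
    "translate": "Translate English to German: {text}",
    "classify": "Is the sentiment of this review positive or negative?\n{text}",
    "qa": "Answer the following question: {text}",
}


def build_prompt(task: str, text: str) -> str:
    """Fill the template for `task` with `text` to form the `prompt` input."""
    try:
        template = TEMPLATES[task]
    except KeyError:
        raise ValueError(f"unknown task: {task!r}") from None
    return template.format(text=text.strip())


if __name__ == "__main__":
    print(build_prompt("translate", "  How old are you?  "))
```

The returned string is what you would pass as `prompt`. Instruction-tuned models can be sensitive to phrasing, so it is worth comparing a few wordings per task.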

Capabilities

flan-t5-xl is a versatile language model that can perform a wide range of NLP tasks. Because it has been fine-tuned on more than 1,000 different tasks and datasets, it can often handle a new task zero-shot, simply by following an instruction in the prompt. It performs well on tasks such as summarization, translation, question answering, and open-ended text generation.

What can I use it for?

The flan-t5-xl model could be used for a variety of applications that require natural language processing, such as:

- Content generation: Use the model to generate human-like text for things like product descriptions, marketing copy, or creative writing.

- Summarization: Leverage the model's summarization capabilities to automatically generate concise summaries of long documents or articles.

- Translation: Prompt the model to translate text (for example, "Translate English to German: ..."), or fine-tune it further on parallel data to improve quality for specific language pairs.

- Question answering: Use the model to build chatbots or virtual assistants that can understand and respond to user questions.

Things to try

One interesting aspect of flan-t5-xl is its strong few-shot performance: it can often achieve good results on a new task when given just a handful of worked examples in the prompt, without any additional fine-tuning. Experimenting with different prompt wordings and few-shot setups may uncover surprising and novel applications for the model.
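A few-shot prompt places a handful of solved examples ahead of the new input, so the model can infer the task from the pattern. The helper below is a minimal sketch. The `few_shot_prompt` name and the `Input:`/`Output:` layout are assumptions, and any consistent format may work.

```python
from typing import Sequence, Tuple


def few_shot_prompt(
    instruction: str,
    examples: Sequence[Tuple[str, str]],
    query: str,
) -> str:
    """Build a prompt: an instruction, k solved examples, then the unsolved query.

    The "Input:/Output:" layout is a hypothetical convention, not a required format.
    """
    parts = [instruction.strip(), ""]
    for source, target in examples:
        parts.append(f"Input: {source}")
        parts.append(f"Output: {target}")
        parts.append("")  # blank line between demonstrations
    parts.append(f"Input: {query}")
    parts.append("Output:")  # the model is expected to continue from here
    return "\n".join(parts)


if __name__ == "__main__":
    print(few_shot_prompt(
        "Classify the sentiment as positive or negative.",
        [("I loved it", "positive"), ("Terrible service", "negative")],
        "Great value",
    ))
```

Comparing results with zero, two, and four demonstrations is a quick way to check whether the examples are helping on a given task.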

Another intriguing area to explore would be using the flan-t5-xl model in a multi-modal setting, combining its language understanding capabilities with visual or other modalities. This could unlock new ways of interacting with and reasoning about the world.

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

flan-t5-large


flan-t5-large is a language model developed by Google that can be used for a variety of natural language processing tasks, such as classification and summarization. It is part of the FLAN-T5 family, which consists of instruction-fine-tuned versions of the original T5 model with improved performance across a wide range of tasks and languages. flan-t5-large has more parameters than the base T5 model, allowing it to tackle more complex language tasks. Compared with the original T5, it has been fine-tuned on more than 1,000 additional tasks covering a diverse set of languages, including English, Spanish, Japanese, Hindi, and French, among many others. This broad task and language coverage makes flan-t5-large a versatile model. It is based on the Transformer architecture, can be used for both generation and classification tasks, and is publicly available through the Hugging Face Transformers library.

Model inputs and outputs

Inputs

- prompt: The text prompt the model uses to generate output.

- max_length: The maximum number of tokens to generate.

- temperature: A value between 0 and 5 that controls the randomness of the output. Higher values produce more diverse but less coherent text.

- top_p: The nucleus sampling threshold. The model samples only from the smallest set of most likely tokens whose cumulative probability reaches top_p, so lower values ignore more of the unlikely tokens.

- repetition_penalty: Values greater than 1 discourage the model from repeating words; values less than 1 encourage repetition.

- debug: A boolean flag that enables additional debugging output.

Outputs

- Output: An array of strings containing the generated text.

Capabilities

flan-t5-large can tackle a wide range of natural language processing tasks, including text classification, summarization, translation, and question answering. Its strong few-shot performance, even compared with much larger models, makes it a useful tool for researchers and developers.

What can I use it for?

The broad capabilities of flan-t5-large make it suitable for applications such as:

- Content generation: Generating human-like text for chatbots, creative writing, or other applications that need natural language output.

- Text summarization: Condensing long passages into concise summaries.

- Language translation: Translating text between the 50+ supported languages.

- Question answering: Answering questions by extracting relevant information from a given context.

- Text classification: Categorizing text by topic or sentiment.

The model can also be fine-tuned further on domain-specific datasets for more specialized use cases.

Things to try

Some ideas for experiments with flan-t5-large:

- Zero-shot and few-shot learning: Using the model's strong few-shot performance to tackle new tasks with little or no labeled data.

- Multilingual applications: Using its support for more than 50 languages to build cross-lingual applications.

- Bias and fairness analysis: Studying the model's potential biases and exploring ways to improve its fairness and safety.

- Novel task generation: Developing new benchmarks and tasks that push the boundaries of language model capabilities.

flan-t5-large provides a solid foundation for a wide range of natural language processing research and applications.
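The temperature, top_p, and repetition_penalty inputs described above all reshape the model's next-token scores before a token is picked. The sketch below is a minimal, assumption-laden illustration of one decoding step. It represents scores as a plain dict of token to logit, whereas real implementations operate on tensors over the whole vocabulary. The repetition penalty follows the CTRL-style rule used by Hugging Face's `RepetitionPenaltyLogitsProcessor`. How Replicate's deployment implements these inputs is not stated in the source, and the function names here are hypothetical.

```python
import math
import random
from typing import Dict, Iterable, List, Optional


def apply_repetition_penalty(
    logits: Dict[str, float], previous: Iterable[str], penalty: float
) -> Dict[str, float]:
    """CTRL-style penalty: divide positive logits, multiply negative ones.

    penalty > 1 makes already-generated tokens less likely; < 1 more likely.
    """
    adjusted = dict(logits)
    for tok in set(previous):
        if tok in adjusted:
            score = adjusted[tok]
            adjusted[tok] = score / penalty if score > 0 else score * penalty
    return adjusted


def softmax(logits: Dict[str, float], temperature: float) -> Dict[str, float]:
    """Temperature-scaled softmax; lower temperature sharpens the distribution."""
    scaled = {t: s / temperature for t, s in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {t: math.exp(s - m) for t, s in scaled.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}


def top_p_filter(probs: Dict[str, float], top_p: float) -> Dict[str, float]:
    """Keep the smallest set of most likely tokens whose mass reaches top_p."""
    kept: Dict[str, float] = {}
    cumulative = 0.0
    for tok, p in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
        kept[tok] = p
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(kept.values())
    return {t: p / total for t, p in kept.items()}  # renormalize the survivors


def sample_next_token(
    logits: Dict[str, float],
    previous: List[str],
    temperature: float = 1.0,
    top_p: float = 1.0,
    repetition_penalty: float = 1.0,
    rng: Optional[random.Random] = None,
) -> str:
    rng = rng or random.Random()
    logits = apply_repetition_penalty(logits, previous, repetition_penalty)
    if temperature <= 0:  # assumption: treat zero temperature as greedy decoding
        return max(logits, key=logits.get)
    probs = top_p_filter(softmax(logits, temperature), top_p)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]
```

For example, `sample_next_token({"yes": 2.0, "no": 1.0, "maybe": -1.0}, ["yes"], temperature=0.7, top_p=0.9, repetition_penalty=1.2)` first weakens "yes" because it was already generated, then sharpens the distribution and drops the low-probability tail before sampling.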


stable-diffusion


Stable Diffusion is a latent text-to-image diffusion model capable of generating photorealistic images from any text input. Developed by Stability AI, it can create detailed visuals from simple text prompts. The model has several versions, each trained for longer and producing higher-quality images than the previous one.

The main advantage of Stable Diffusion is its ability to generate highly detailed and realistic images from a wide range of textual descriptions, which makes it a powerful tool for creative applications that let users visualize ideas and concepts photorealistically. Because it was trained on a large and diverse dataset, it handles a broad spectrum of subjects and styles.

Model inputs and outputs

Inputs

- Prompt: The text prompt that describes the desired image. This can be a simple description or a more detailed, creative prompt.

- Seed: An optional random seed that controls the randomness of the generation process.

- Width and Height: The dimensions of the generated image, which must be multiples of 64.

- Scheduler: The algorithm used to generate the image, with options such as DPMSolverMultistep.

- Num Outputs: The number of images to generate (up to 4).

- Guidance Scale: The scale for classifier-free guidance, which controls the trade-off between image quality and faithfulness to the prompt.

- Negative Prompt: Text describing things the model should avoid including in the image.

- Num Inference Steps: The number of denoising steps performed during generation.

Outputs

- Array of image URLs: The generated images, returned as an array of URLs.

Capabilities

Stable Diffusion can generate a wide variety of photorealistic images from text prompts, including people, animals, landscapes, and architecture, with a high level of detail. It is particularly good at rendering complex scenes and capturing the essence of the prompt.

One of its key strengths is handling diverse prompts, from simple descriptions to creative and imaginative ideas. It can generate fantastical creatures, surreal landscapes, and even abstract concepts.

What can I use it for?

Stable Diffusion can be used for a variety of creative applications, such as:

- Visualizing ideas and concepts for art, design, or storytelling

- Generating images for marketing, advertising, or social media

- Aiding the development of games, movies, or other visual media

- Exploring and experimenting with new ideas and artistic styles

Its versatility and output quality make it a valuable tool for anyone looking to bring ideas to life through visual art.

Things to try

One interesting aspect of Stable Diffusion is the level of detail and realism it can achieve. Try prompts that combine specific elements, such as "a steam-powered robot exploring a lush, alien jungle," to see how the model handles complex and imaginative scenes.

Because the model supports different image sizes, you can also generate images at various scales to see how it handles the level of detail required for different use cases, from high-resolution artwork to smaller social media graphics. Experimenting with prompts, settings, and output sizes is the most direct way to learn what the model can do.
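Guidance Scale and Negative Prompt both act through classifier-free guidance. A common formulation, used for example in the Hugging Face Diffusers pipelines, runs the denoiser twice per step: once with the prompt and once with an empty or negative prompt. It then extrapolates as noise = uncond + scale * (cond - uncond). The sketch below shows that combination on plain lists standing in for latent tensors, along with a check of the multiple-of-64 size rule listed in the inputs. The function names are hypothetical, and whether Replicate's deployment follows exactly this code path is an assumption.

```python
from typing import List, Sequence


def classifier_free_guidance(
    noise_uncond: Sequence[float],
    noise_cond: Sequence[float],
    guidance_scale: float,
) -> List[float]:
    """Combine the two noise predictions made at one denoising step.

    noise_uncond: prediction for the empty (or negative) prompt
    noise_cond:   prediction for the user's prompt
    A scale of 1.0 returns noise_cond; larger scales push further toward it.
    """
    if len(noise_uncond) != len(noise_cond):
        raise ValueError("predictions must have the same shape")
    return [u + guidance_scale * (c - u) for u, c in zip(noise_uncond, noise_cond)]


def check_dimensions(width: int, height: int, multiple: int = 64) -> None:
    """Reject sizes that are not positive multiples of `multiple`."""
    for name, value in (("width", width), ("height", height)):
        if value <= 0 or value % multiple != 0:
            raise ValueError(f"{name}={value} must be a positive multiple of {multiple}")
```

Because the negative prompt replaces the unconditional branch in this formulation, raising the guidance scale pushes the image both toward the prompt and away from the negative prompt.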


gpt-j-6b


gpt-j-6b is a 6-billion-parameter language model developed by EleutherAI, a non-profit AI research group. It can be fine-tuned for a variety of natural language processing tasks. Compared with related models such as flan-t5-xl, stable-diffusion (text-to-image), and llava-13b (vision and language), gpt-j-6b is a decoder-only model designed specifically for text generation and language understanding.

Model inputs and outputs

gpt-j-6b takes a text prompt as input and generates a completion as more text. It can be fine-tuned on a specific dataset to adapt it to tasks such as question answering, summarization, and creative writing.

Inputs

- Prompt: The initial text the model uses to generate a completion.

Outputs

- Completion: The text generated by the model from the input prompt.

Capabilities

gpt-j-6b can generate human-like text across a wide range of domains, from creative writing to task-oriented dialog. It can be used for summarization, translation, and open-ended question answering, and its performance on specific tasks can be improved further through fine-tuning.

What can I use it for?

gpt-j-6b can be used for a variety of applications, such as:

- Content generation: Generating text for articles, stories, scripts, and more.

- Chatbots and virtual assistants: Building conversational AI systems that engage in natural dialogue.

- Question answering: Answering open-ended questions by drawing on and synthesizing information learned during training.

- Summarization: Condensing long-form text into concise summaries.

These capabilities make gpt-j-6b a versatile tool for businesses, researchers, and developers who want to apply natural language processing in their projects.

Things to try

gpt-j-6b can adapt quickly to a new task or domain from only a few examples, either shown directly in the prompt (few-shot prompting) or supplied as a small fine-tuning dataset. This makes it useful for rapid prototyping and experimentation. Try fine-tuning it on your own dataset to see how it performs on a specific task.
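To make "prompt in, completion out" concrete, the sketch below shows the autoregressive loop a decoder-only model such as gpt-j-6b runs. At each step it scores possible next tokens given the context, appends the chosen token, and repeats until it reaches an end token or a length limit. For simplicity it uses greedy selection; real deployments usually sample. It also uses a toy bigram table in place of the real network. `greedy_complete`, `toy_scores`, `BIGRAMS`, and the `<eos>` marker are hypothetical names for illustration only.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for the model: context tokens -> scores for the next token.
NextTokenScores = Callable[[List[str]], Dict[str, float]]


def greedy_complete(
    prompt_tokens: List[str],
    next_token_scores: NextTokenScores,
    max_new_tokens: int,
    eos: str = "<eos>",
) -> List[str]:
    """Autoregressive greedy decoding: repeatedly append the top-scoring token."""
    context = list(prompt_tokens)
    completion: List[str] = []
    for _ in range(max_new_tokens):
        scores = next_token_scores(context)
        token = max(scores, key=scores.get)
        if token == eos:
            break
        completion.append(token)
        context.append(token)  # the new token becomes part of the context
    return completion


# Toy bigram table used only to make the example runnable; a real model
# conditions on the whole context, not just the last token.
BIGRAMS: Dict[str, Dict[str, float]] = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.9, "<eos>": 0.1},
    "sat": {"<eos>": 0.7, "down": 0.3},
}


def toy_scores(context: List[str]) -> Dict[str, float]:
    return BIGRAMS.get(context[-1], {"<eos>": 1.0})
```

With the toy table, starting from the prompt `["the"]` yields the completion `["cat", "sat"]`, because the end token outscores "down" after "sat".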


llama-13b-lora


llama-13b-lora is a Transformers implementation of the 13-billion-parameter LLaMA language model, created by Replicate. It is similar to other LLaMA models such as llama-7b, llama-2-13b, and llama-2-7b. There are also versions of LLaMA tuned for code completion, such as codellama-13b and codellama-13b-instruct.

Model inputs and outputs

llama-13b-lora takes a text prompt as input and generates text as output. Parameters control the randomness, length, and repetition of the generated text.

Inputs

- Prompt: The text prompt to send to the LLaMA model.

- Max Length: The maximum number of tokens to generate. A word typically corresponds to one or more tokens.

- Temperature: Adjusts the randomness of the output. Higher values are more random; lower values are more deterministic.

- Top P: Samples from the smallest set of most likely tokens whose cumulative probability reaches p, so the model ignores less likely tokens.

- Repetition Penalty: Penalizes repeated words in the generated text. Values greater than 1 discourage repetition, and values less than 1 encourage it.

- Debug: Provides debugging output in the logs.

Outputs

- An array of generated text outputs.

Capabilities

llama-13b-lora can generate human-like text on a wide range of topics and can be used for language modeling, text generation, question answering, and more. Its capabilities are similar to those of other LLaMA models, with the added benefits of the LoRA (Low-Rank Adaptation) fine-tuning approach.

What can I use it for?

llama-13b-lora can be used for a variety of natural language processing tasks, such as:

- Generating creative content like stories, articles, or poetry

- Answering questions and providing information on a wide range of topics

- Assisting with research, analysis, and brainstorming

- Helping with language learning and translation

- Powering conversational interfaces and chatbots

Companies and individuals may be able to build llama-13b-lora into their own products and services, for example applications built on Replicate. Commercial use is subject to the license of the underlying LLaMA weights.

Things to try

Experiment with different prompts and parameters to see how they affect the generated text. For example, adjust the temperature for more or less random output, or adjust the repetition penalty to control how often the model repeats words or phrases. You can also test the model on specific tasks such as summarization, question answering, or creative writing to see how it performs.
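The LoRA approach mentioned above keeps a pretrained weight matrix W frozen and learns a low-rank correction B·A, scaled by alpha/r, so that a layer computes y = W x + (alpha / r) · B (A x). The sketch below shows that forward pass on plain Python lists, plus the resulting parameter counts. It illustrates the general technique only. The function names are hypothetical, and the rank and alpha values Replicate used for llama-13b-lora are not stated in the source.

```python
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: Vector, alpha: float) -> Vector:
    """y = W x + (alpha / r) * B (A x), with W frozen and only A, B trained.

    W: d_out x d_in pretrained weight (frozen)
    A: r x d_in and B: d_out x r low-rank adapters (r much smaller than d_in, d_out)
    B is usually initialized to zeros, so training starts from the pretrained model.
    """
    r = len(A)
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]


def lora_param_counts(d_in: int, d_out: int, r: int) -> Tuple[int, int]:
    """Trainable parameters: (full fine-tuning of W, LoRA adapters A and B)."""
    return d_out * d_in, r * (d_in + d_out)
```

For a square 5120 x 5120 projection (the hidden size of LLaMA 13B) and an illustrative rank of r = 8, `lora_param_counts(5120, 5120, 8)` gives 26,214,400 full parameters versus 81,920 adapter parameters, about 0.3% as many.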
