photomaker-style

Maintainer: tencentarc


| Property | Value |
|---|---|
| Model Link | View on Replicate |
| API Spec | View on Replicate |
| Github Link | View on Github |
| Paper Link | View on Arxiv |


Model overview

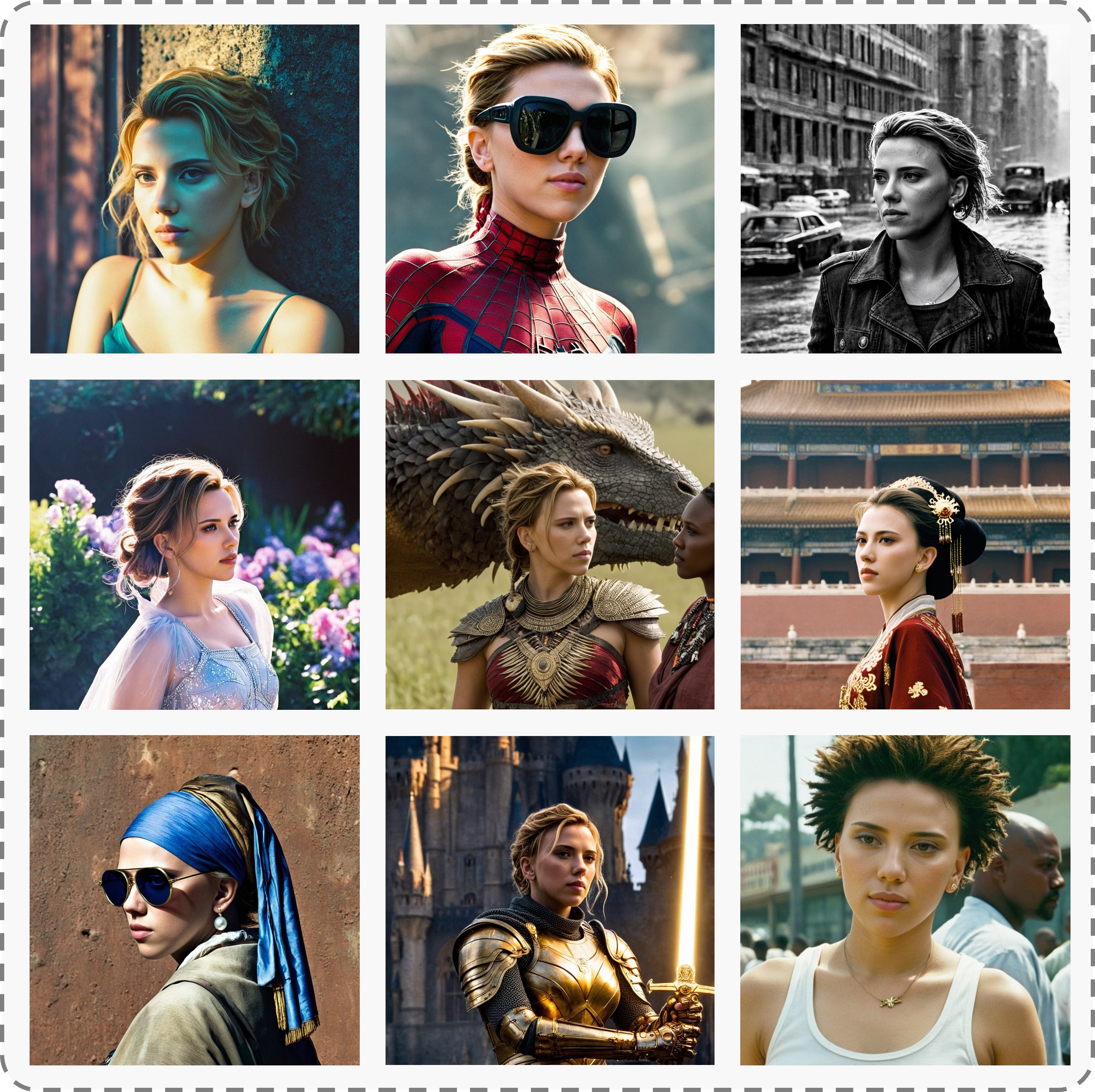

photomaker-style is an AI model created by Tencent ARC Lab that customizes realistic human photos in a range of artistic styles. It builds on the base Stable Diffusion XL model and adds a stacked ID embedding module for high-fidelity face personalization. Where GFPGAN targets face restoration and the original PhotoMaker targets realistic photo generation, photomaker-style specializes in applying artistic styles to personalized human faces. It generates photos, paintings, and avatars in diverse styles within seconds.

Model inputs and outputs

photomaker-style takes in one or more face photos of the person to be customized, along with a text prompt describing the desired style and appearance. The model then outputs a set of customized images in the requested style, preserving the identity of the input face.

Inputs

- Input Image(s): One or more face photos of the person to be customized

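- Prompt: Text prompt describing the desired style and appearance, e.g. "a photo of a woman img in the style of Vincent Van Gogh". Note the `img` token placed directly after the class word ("woman"), which marks the subject to personalize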

- Negative Prompt: Text prompt describing undesired elements to avoid in the output

- Seed: Optional integer seed value for reproducible generation

- Guidance Scale: How strongly generation follows the text prompt; higher values adhere to the prompt more closely

- Style Strength Ratio: Strength of the artistic style application
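As a rough illustration of what a guidance scale controls: in classifier-free guidance, which Stable Diffusion-family models commonly use, the sampler blends an unconditional noise prediction with a prompt-conditioned one. The sketch below uses plain Python lists in place of tensors. The function name and sample values are illustrative assumptions, and the code is not taken from the photomaker-style implementation.

```python
from typing import List, Sequence


def apply_guidance(
    uncond: Sequence[float],
    cond: Sequence[float],
    guidance_scale: float,
) -> List[float]:
    """Blend unconditional and prompt-conditioned predictions.

    guided = uncond + guidance_scale * (cond - uncond)
    """
    if len(uncond) != len(cond):
        raise ValueError("predictions must have the same length")
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]


# A scale of 1.0 returns the conditioned prediction unchanged;
# larger values push the result further in the prompt's direction.
print(apply_guidance([0.0, 1.0], [1.0, 3.0], 1.0))
print(apply_guidance([0.0, 1.0], [1.0, 3.0], 5.0))
```

Very high scales tend to trade image naturalness for prompt adherence, so moderate values are a common starting point.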

Outputs

- Customized Images: Set of images generated in the requested style, preserving the identity of the input face
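The inputs listed above can be gathered into a single request payload before being sent to whichever client hosts the model. The following is a minimal sketch under stated assumptions: every key name (`input_images`, `style_strength_ratio`, and so on) and both numeric defaults are hypothetical placeholders, so check the API spec for the real field names and ranges. The `img` check assumes the token seen in the example prompt is required.

```python
from typing import Any, Dict, List, Optional

# Assumed trigger token, taken from the example prompt ("a woman img ...").
TRIGGER_WORD = "img"


def build_input(
    image_urls: List[str],
    prompt: str,
    negative_prompt: str = "",
    seed: Optional[int] = None,
    guidance_scale: float = 5.0,         # placeholder default, not from the spec
    style_strength_ratio: float = 20.0,  # placeholder default, not from the spec
) -> Dict[str, Any]:
    """Assemble a request payload; all key names are hypothetical."""
    if not image_urls:
        raise ValueError("at least one face photo is required")
    if TRIGGER_WORD not in prompt.split():
        raise ValueError(f"prompt should contain the token {TRIGGER_WORD!r}")
    payload: Dict[str, Any] = {
        "input_images": list(image_urls),
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "guidance_scale": guidance_scale,
        "style_strength_ratio": style_strength_ratio,
    }
    if seed is not None:
        payload["seed"] = seed  # leave the seed out to get a random one
    return payload


payload = build_input(
    ["https://example.com/face.jpg"],
    "a photo of a woman img in the style of Vincent Van Gogh",
    negative_prompt="blurry, low quality",
    seed=42,
)
print(payload)
```

Validating the payload locally catches a missing photo or trigger token before any request is made, and fixing the seed makes a run reproducible.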

Capabilities

photomaker-style generates personalized images in diverse artistic styles, from photorealistic portraits to impressionistic paintings and stylized avatars. Its stacked ID embedding module, built on the Stable Diffusion XL backbone, preserves the identity of the input face while the text prompt controls style and content.

What can I use it for?

photomaker-style is suited to creating custom profile pictures, avatars, or artistic renditions of oneself or others. Individual users, content creators, and businesses could use it to generate personalized images for social media, virtual events, or product packaging and marketing. Combining a preserved identity with a chosen artistic style also supports self-expression and other creative projects.

Things to try

Experiment with different input face photos and prompts to see how photomaker-style can transform them into diverse artistic interpretations. Try out various styles like impressionism, expressionism, or surrealism. You can also combine photomaker-style with other LoRA modules or base models to explore even more creative possibilities. Additionally, consider using photomaker-style as an adapter to collaborate with other models in your projects, leveraging its powerful face personalization capabilities.

This summary was produced with help from an AI and may contain inaccuracies - check out the links to read the original source documents!

Related Models

gfpgan


gfpgan is a practical face restoration algorithm developed by the Tencent ARC team. It leverages the rich and diverse priors encapsulated in a pre-trained face GAN (such as StyleGAN2) to perform blind face restoration on old photos or AI-generated faces. This approach contrasts with similar models like Real-ESRGAN, which focuses on general image restoration, or PyTorch-AnimeGAN, which specializes in anime-style photo animation.

Model inputs and outputs

gfpgan takes an input image and rescales it by a specified factor, typically 2x. The model can handle a variety of face images, from low-quality old photos to high-quality AI-generated faces.

Inputs

- Img: The input image to be restored

- Scale: The factor by which to rescale the output image (default is 2)

- Version: The gfpgan model version to use (v1.3 for better quality, v1.4 for more details and better identity)

Outputs

- Output: The restored face image

Capabilities

gfpgan can effectively restore a wide range of face images, from old, low-quality photos to high-quality AI-generated faces. It is able to recover fine details, fix blemishes, and enhance the overall appearance of the face while preserving the original identity.

What can I use it for?

You can use gfpgan to restore old family photos, enhance AI-generated portraits, or breathe new life into low-quality images of faces. The model's capabilities make it a valuable tool for photographers, digital artists, and anyone looking to improve the quality of their facial images. Additionally, the maintainer tencentarc offers an online demo on Replicate, allowing you to try the model without setting up the local environment.

Things to try

Experiment with different input images, varying the scale and version parameters, to see how gfpgan can transform low-quality or damaged face images into high-quality, detailed portraits. You can also try combining gfpgan with other models like Real-ESRGAN to enhance the background and non-facial regions of the image.


gfpgan


gfpgan is a practical face restoration algorithm developed by Tencent ARC, aimed at restoring old photos or AI-generated faces. It leverages rich and diverse priors encapsulated in a pretrained face GAN (such as StyleGAN2) for blind face restoration. Related models include Codeformer, which also focuses on robust face restoration; upscaler, which aims for general image restoration; ESRGAN, which specializes in image super-resolution; and GPEN, which focuses on blind face restoration in the wild.

Model inputs and outputs

gfpgan takes an image as input and outputs a restored version of that image, with the faces improved in quality and detail. The model supports upscaling the image by a specified factor.

Inputs

- img: The input image to be restored

Outputs

- Output: The restored image with improved face quality and detail

Capabilities

gfpgan can effectively restore old or low-quality photos, as well as faces in AI-generated images. It leverages a pretrained face GAN to inject realistic facial features and details, resulting in natural-looking face restoration. The model can handle a variety of face poses, occlusions, and image degradations.

What can I use it for?

gfpgan can be used for a range of applications involving face restoration, such as improving old family photos, enhancing AI-generated avatars or characters, and restoring low-quality images from social media. The model's ability to preserve identity and produce natural-looking results makes it suitable for both personal and commercial use cases.

Things to try

Experiment with different input image qualities and upscaling factors to see how gfpgan handles a variety of restoration scenarios. You can also try combining gfpgan with other models like Real-ESRGAN to enhance the non-face regions of the image for a more comprehensive restoration.


photomaker


PhotoMaker is a text-to-image AI model developed by TencentARC that allows users to input one or a few face photos along with a text prompt to receive a customized photo or painting within seconds. The model can be adapted to any base model based on SDXL or used in conjunction with other LoRA modules. PhotoMaker produces both realistic and stylized results, as shown in the examples on the project page. Similar models include photomaker, GFPGAN, and PixArt-XL-2-1024-MS.

Model inputs and outputs

PhotoMaker takes one or more face photos and a text prompt as input, and generates a customized photo or painting as output. The model is capable of producing both realistic and stylized results, allowing users to experiment with different artistic styles.

Inputs

- Face photos: One or more face photos that the model can use to generate the customized image.

- Text prompt: A description of the desired image, which the model uses to generate the output.

Outputs

- Customized photo/painting: The generated image, which can be either a realistic photo or a stylized painting, depending on the input prompt.

Capabilities

PhotoMaker is capable of generating high-quality, customized images from face photos and text prompts. The model can produce both realistic and stylized results, allowing users to explore different artistic styles. For example, the model can generate images of a person in a specific pose or setting, or it can create paintings in the style of a particular artist.

What can I use it for?

PhotoMaker can be used for a variety of creative and artistic projects. For example, you could use the model to generate personalized portraits, create concept art for a story or game, or experiment with different artistic styles. The model could also be integrated into educational or creative tools to help users express their ideas visually.

Things to try

One interesting thing to try with PhotoMaker is to experiment with different text prompts and see how the model responds. You could try prompts that combine specific details about the desired image with more abstract or creative language, or prompts that ask the model to mix different artistic styles. Additionally, you could try using the model in conjunction with other LoRA modules or fine-tuning it on different datasets to see how it performs in different contexts.


stable-diffusion


Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input. Developed by Stability AI, it is an impressive AI model that can create stunning visuals from simple text prompts. The model has several versions, with each newer version being trained for longer and producing higher-quality images than the previous ones.

The main advantage of Stable Diffusion is its ability to generate highly detailed and realistic images from a wide range of textual descriptions. This makes it a powerful tool for creative applications, allowing users to visualize their ideas and concepts in a photorealistic way. The model has been trained on a large and diverse dataset, enabling it to handle a broad spectrum of subjects and styles.

Model inputs and outputs

Inputs

- Prompt: The text prompt that describes the desired image. This can be a simple description or a more detailed, creative prompt.

- Seed: An optional random seed value to control the randomness of the image generation process.

- Width and Height: The desired dimensions of the generated image, which must be multiples of 64.

- Scheduler: The algorithm used to generate the image, with options like DPMSolverMultistep.

- Num Outputs: The number of images to generate (up to 4).

- Guidance Scale: The scale for classifier-free guidance, which controls the trade-off between image quality and faithfulness to the input prompt.

- Negative Prompt: Text that specifies things the model should avoid including in the generated image.

- Num Inference Steps: The number of denoising steps to perform during the image generation process.

Outputs

- Array of image URLs: The generated images are returned as an array of URLs pointing to the created images.

Capabilities

Stable Diffusion is capable of generating a wide variety of photorealistic images from text prompts. It can create images of people, animals, landscapes, architecture, and more, with a high level of detail and accuracy. The model is particularly skilled at rendering complex scenes and capturing the essence of the input prompt.

One of the key strengths of Stable Diffusion is its ability to handle diverse prompts, from simple descriptions to more creative and imaginative ideas. The model can generate images of fantastical creatures, surreal landscapes, and even abstract concepts with impressive results.

What can I use it for?

Stable Diffusion can be used for a variety of creative applications, such as:

- Visualizing ideas and concepts for art, design, or storytelling

- Generating images for use in marketing, advertising, or social media

- Aiding in the development of games, movies, or other visual media

- Exploring and experimenting with new ideas and artistic styles

The model's versatility and high-quality output make it a valuable tool for anyone looking to bring their ideas to life through visual art. By combining the power of AI with human creativity, Stable Diffusion opens up new possibilities for visual expression and innovation.

Things to try

One interesting aspect of Stable Diffusion is its ability to generate images with a high level of detail and realism. Users can experiment with prompts that combine specific elements, such as "a steam-powered robot exploring a lush, alien jungle," to see how the model handles complex and imaginative scenes.

Additionally, the model's support for different image sizes and resolutions allows users to explore the limits of its capabilities. By generating images at various scales, users can see how the model handles the level of detail and complexity required for different use cases, such as high-resolution artwork or smaller social media graphics.

Overall, Stable Diffusion is a powerful and versatile AI model that offers endless possibilities for creative expression and exploration. By experimenting with different prompts, settings, and output formats, users can unlock the full potential of this cutting-edge text-to-image technology.
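The Stable Diffusion inputs described above require width and height to be multiples of 64. When experimenting with image sizes, a small helper can snap an arbitrary dimension to the nearest valid value before it is submitted. This is a sketch only: `snap_to_multiple` is a hypothetical helper name, and rounding ties upward is an arbitrary choice made here.

```python
def snap_to_multiple(value: int, multiple: int = 64) -> int:
    """Round `value` to the nearest positive multiple of `multiple`.

    Ties round up, and results never drop below one full multiple.
    """
    if value <= 0 or multiple <= 0:
        raise ValueError("value and multiple must be positive")
    snapped = (value + multiple // 2) // multiple * multiple
    return max(multiple, snapped)


for requested in (500, 768, 800, 1000):
    print(requested, "->", snap_to_multiple(requested))
```

Snapping on the client side avoids a rejected request when a dimension such as 500 or 1000 is chosen by hand.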
